Comprehensive data compiled from extensive research on duplicate claims prevention, fraud mitigation, and settlement administration efficiency

Key Takeaways

- Duplicate claims represent a multi-billion dollar problem – Poor data quality costs U.S. businesses $3.1 trillion annually, with duplicate records driving significant portions of this financial drain across legal and insurance sectors

- Traditional detection systems miss most duplicates – Only 38% of duplicate records are exact matches, meaning the remaining 62%, the "soft duplicates," slip through standard detection methods and create costly inefficiencies in claims payout processes

- Financial losses compound rapidly – Each duplicate healthcare record costs $1,950 to resolve, while a single California workers' comp case saw duplicate medical records cause a $58,000 overcharge

- AI-powered detection delivers measurable results – Automated deduplication reduces duplicate records by 30-40% within the first few months, with leading organizations achieving duplicate rates as low as 0.14%

- False Claims Act enforcement intensifies – FCA settlements and judgments exceeded $6.8 billion in fiscal year 2025, creating urgent pressure on claims administrators to prevent fraudulent and duplicate submissions

- Data entry remains the primary vulnerability – 92% of patient identification errors tied to duplicate records occur during initial registration, highlighting the need for real-time validation systems

Understanding the Landscape: The Scale of Duplicate Claims and Duplicate Records

1. False Claims Act settlements and judgments exceeded $6.8 billion in fiscal year 2025

The U.S. Department of Justice reported over $6.8 billion in settlements and judgments, reflecting continued enforcement against fraudulent claims including duplicate submissions. This substantial recovery underscores the regulatory environment claims administrators face when managing settlement distributions and legal payouts. Source: U.S. Department of Justice

2. Whistleblowers filed 1,297 qui tam lawsuits in fiscal year 2025

These whistleblower-initiated cases represent sustained vigilance around claims fraud and duplicate submissions. Organizations handling high-volume payouts must implement robust detection systems to avoid becoming enforcement targets. Source: U.S. Department of Justice

3. Healthcare-related False Claims Act recoveries exceeded $1.8 billion in fiscal year 2023

The healthcare sector continues to represent the largest source of fraudulent claims, including duplicate billing and submission schemes. This concentration demonstrates why claims administrators across industries must prioritize fraud mitigation and duplicate detection capabilities. Source: U.S. Department of Justice

4. Total False Claims Act recoveries since 1986 now exceed $85 billion

This cumulative figure represents decades of enforcement against fraudulent claims, establishing clear precedent for aggressive prosecution of duplicate and false submissions. Settlement administrators benefit from platforms with built-in compliance and verification capabilities. Source: U.S. Department of Justice

The Real Financial Impact: Quantifying Losses from Duplicates

5. Poor data quality costs U.S. businesses $3.1 trillion annually

Harvard Business Review and IBM Research quantified this staggering figure, with duplicate records representing a significant portion of these losses. For claims administrators, preventing duplicate payouts directly protects settlement funds and preserves organizational resources. Source: Landbase

6. Organizations lose an average of $13 million per year due to poor data quality

Gartner research establishes this benchmark for average organizational losses from data quality issues including duplicates. Claims teams without automated detection systems face substantial financial exposure with each settlement campaign. Source: Landbase

7. Each duplicate healthcare record costs approximately $1,950 to resolve

Black Book Research quantified the per-incident cost of duplicate record resolution, demonstrating how quickly costs compound across large claim populations. Settlement administrators handling thousands of claimants face potential exposure in the millions without proper detection. Source: HealthLeaders
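To make the "exposure in the millions" claim concrete, here is a minimal arithmetic sketch. The function name `resolution_exposure`, the 10,000-record population, and the 15% duplicate rate are illustrative assumptions, not figures from the cited research; only the $1,950 per-record cost comes from the statistic above.

```python
def resolution_exposure(
    total_records: int,
    duplicate_rate: float,
    cost_per_duplicate: float = 1950.0,  # per-record figure cited above
) -> float:
    """Estimate the cost of resolving duplicates in a claim population.

    Hypothetical helper: duplicate_rate is the assumed share of records
    that are duplicates, expressed as a fraction between 0 and 1.
    """
    if not 0.0 <= duplicate_rate <= 1.0:
        raise ValueError("duplicate_rate must be between 0 and 1")
    expected_duplicates = round(total_records * duplicate_rate)
    return expected_duplicates * cost_per_duplicate


# Assumed scenario: 10,000 claimant records with a 15% duplicate rate.
print(resolution_exposure(10_000, 0.15))
```

Under those assumptions the estimate is 1,500 duplicates at $1,950 each, or $2,925,000. Actual exposure depends on the real duplicate rate and resolution cost for a given settlement.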

8. Healthcare facilities spend over $1 million annually fixing duplicate data issues

This operational burden diverts resources from core activities and extends settlement timelines. Platforms like Talli address this challenge through automated verification and fraud mitigation built directly into the payout workflow. Source: TechTarget

9. A single California workers' comp case saw duplicates cause a $58,000 overcharge

This real-world example demonstrates how duplicate medical records can create massive cost impacts on individual claims. The case involved Medical-Legal Provider Reimbursement Rate calculations that traditional detection systems failed to catch. Source: Insurance Thought Leadership

10. Patient identification errors from duplicates cost $1.7 billion annually in malpractice

Beyond direct duplicate costs, downstream errors create substantial liability exposure. Claims administrators must consider total cost of ownership when evaluating detection solutions. Source: Veradigm

Key Statistical Indicators: Patterns of Duplicate Claims

11. Only 38% of duplicate records are exact matches

Standard duplicate detection systems designed around exact matching miss the majority of problematic records. This fundamental limitation explains why organizations continue experiencing duplicate-related losses despite having detection tools in place. Source: Insurance Thought Leadership

12. 62% of duplicates are "soft duplicates" that evade standard detection

These near-duplicates with slight variations in formatting, spelling, or data entry represent the largest detection challenge. AI-powered systems using semantic analysis prove essential for catching these elusive duplicate patterns. Source: Insurance Thought Leadership
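The difference between exact and soft duplicates can be sketched in a few lines. This example uses plain character-level similarity from Python's standard `difflib` module, a much simpler technique than the semantic analysis mentioned above. The function names and the 0.85 threshold are assumptions chosen for illustration.

```python
import re
from difflib import SequenceMatcher


def normalize(value: str) -> str:
    """Lowercase, drop punctuation, and collapse repeated whitespace."""
    cleaned = re.sub(r"[^a-z0-9 ]", "", value.lower())
    return " ".join(cleaned.split())


def similarity(a: str, b: str) -> float:
    """Return a similarity ratio between 0 and 1 for two normalized strings."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def classify_pair(a: str, b: str, threshold: float = 0.85) -> str:
    """Label a pair of records as 'exact', 'soft', or 'distinct'.

    The threshold is an illustrative assumption; real systems tune it
    against labeled data.
    """
    if a == b:
        return "exact"
    if similarity(a, b) >= threshold:
        return "soft"
    return "distinct"


# An exact-match check alone would treat these two records as different people.
print(classify_pair("Jon Smith, 12 Main St.", "John Smith, 12 Main Street"))
```

An exact-match rule sees two unrelated strings here, while the similarity check flags the pair for review. In practice, matching on several fields (name, address, date of birth) and reviewing borderline scores manually would reduce false positives.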

13. Healthcare organizations experience 15-16% duplicate rates in large systems

At a 15-16% rate, this translates to roughly 150,000 to 160,000 duplicate records per 1 million patient records in healthcare databases. Claims administrators handling mass tort or healthcare-related settlements face similar exposure without proper controls. Source: Landbase

Leveraging Advanced Analytics: AI-Powered Detection

14. Automated deduplication reduces duplicates by 30-40% within first months

Organizations implementing AI-powered detection solutions see rapid improvements in duplicate rates, demonstrating the technology's immediate impact on data quality and payout accuracy. Source: Sapien.io

15. 37% of organizations now use AI for data quality improvement

While adoption grows, the majority of claims administrators still rely on manual or rule-based systems that miss soft duplicates. Early adopters gain significant competitive advantages in fraud prevention and operational efficiency. Source: Landbase

16. Survey-reported AI use among lawyers has increased since 2023

The reported 173% increase in legal AI adoption signals industry-wide transformation. Claims administrators partnering with AI-enabled platforms position themselves ahead of regulatory expectations and client demands. Source: Talli Settlement Campaigns

17. 57% automation rate achieved in insurance claims processing with 99.9% accuracy

Leading organizations demonstrate that high automation rates and accuracy are achievable simultaneously. This benchmark establishes expectations for modern settlement administration platforms. Source: Talli Settlement Campaigns

18. A 1,000-page document requires 499,500 comparisons for complete duplicate analysis

The computational complexity of thorough duplicate detection exceeds human capacity, making AI-powered systems essential for large-scale claim processing. Manual review simply cannot match this level of thoroughness. Source: Insurance Thought Leadership
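The 499,500 figure follows from comparing every page against every other page exactly once, which is n(n-1)/2 pairs for n pages. A short sketch (the function name is an assumption for illustration) shows how quickly that count grows:

```python
from math import comb


def pairwise_comparisons(n: int) -> int:
    """Number of unique pairs among n items: n * (n - 1) / 2."""
    return comb(n, 2)


for pages in (100, 1_000, 10_000):
    print(pages, pairwise_comparisons(pages))
```

For 1,000 pages this gives 499,500 comparisons, and for 10,000 pages it gives 49,995,000. A tenfold increase in volume means roughly a hundredfold increase in comparisons, which is why exhaustive manual review does not scale.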

The Role of Data Quality: Prevention at the Source

19. 92% of patient identification errors occur during initial registration

This finding from TechTarget research demonstrates that data entry represents the critical intervention point. Platforms with real-time validation during initial submission prevent duplicates before they enter the system. Source: TechTarget
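As a rough illustration of validation at the point of entry, the sketch below rejects a submission when a normalized version of the claimant's details already exists for the same settlement. The `ClaimRegistry` class, its fields, and the choice of name plus email as the matching key are hypothetical and do not describe any particular platform.

```python
import re


class ClaimRegistry:
    """In-memory registry that checks for duplicates at submission time."""

    def __init__(self) -> None:
        # Maps a normalized key to the claim ID that first used it.
        self._seen: dict[tuple[str, str, str], str] = {}

    @staticmethod
    def _key(name: str, email: str, settlement_id: str) -> tuple[str, str, str]:
        # Normalize so casing, spacing, and punctuation do not create new records.
        name_key = re.sub(r"[^a-z]", "", name.lower())
        email_key = email.strip().lower()
        return (settlement_id, name_key, email_key)

    def submit(self, claim_id: str, name: str, email: str, settlement_id: str) -> tuple[bool, str]:
        """Return (accepted, claim_id), where claim_id is the existing one on a duplicate."""
        key = self._key(name, email, settlement_id)
        existing = self._seen.get(key)
        if existing is not None:
            return False, existing
        self._seen[key] = claim_id
        return True, claim_id


registry = ClaimRegistry()
print(registry.submit("C-1", "Ana Diaz", "ana@example.com", "S-9"))
print(registry.submit("C-2", " ana diaz ", "ANA@Example.com ", "S-9"))
```

The second submission is rejected and pointed back to the first claim, so the claimant can be shown their existing submission status instead of creating a new record. A production system would persist the keys and combine this check with fuzzy matching for near-duplicates.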

20. B2B contact information decays at 70% per year

Claimant data faces similar decay rates, creating continuous duplicate risks as outdated information generates new submissions. Ongoing data hygiene proves essential for maintaining clean reconciliation and reporting. Source: Data Axle

21. 94% of businesses suspect their customer data contains inaccuracies

This near-universal acknowledgment of data quality concerns underscores why claims administrators cannot assume their databases are clean. Proactive detection remains necessary regardless of data source quality. Source: Landbase

22. 86% of healthcare professionals have witnessed errors from duplicate records

The prevalence of duplicate-related errors across healthcare demonstrates how commonly these issues affect real outcomes. Claims administrators handling healthcare-adjacent settlements must account for this systematic data quality challenge. Source: Veradigm

23. Sales teams lose 550 hours annually per representative on inaccurate data

While this statistic addresses sales contexts, claims teams face similar productivity drains. Manual duplicate checking and resolution consumes resources that could be directed toward claimant service and case management. Source: Landbase

Operational Strategies and Technology Integration

24. 29% of organizations don't know their duplicate error rate

Nearly a third of organizations operate without this critical data quality metric, making improvement impossible. Establishing baseline duplicate rates represents the essential first step toward systematic reduction. Source: AHIMA
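Establishing a baseline can start with a simple count. The sketch below assumes each record has already been reduced to a matching key (for example, a normalized name and date of birth) and defines the duplicate rate as redundant records divided by total records. Both the function name and that definition are assumptions; organizations may measure the rate differently.

```python
from collections import Counter
from typing import Iterable


def duplicate_rate(keys: Iterable[str]) -> float:
    """Share of records that repeat a key already present in the data set."""
    counts = Counter(keys)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    # Every occurrence of a key beyond the first counts as a duplicate.
    redundant = total - len(counts)
    return redundant / total


sample = ["a", "b", "a", "c", "a"]
print(f"{duplicate_rate(sample):.1%}")
```

In this sample, two of the five records repeat an existing key, so the baseline is 40%. Tracking the same measurement over time shows whether detection and prevention efforts are working.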

25. 40% of business initiatives fail due to poor data quality

Duplicate records contribute significantly to initiative failures across industries. Settlement campaigns built on unreliable data face similar risks of missed deadlines, compliance issues, and claimant dissatisfaction. Source: Data Axle

26. The 1% duplicate rate benchmark is achieved by 22% of organizations

AHIMA established this industry standard, with approximately one in five organizations meeting the target. This achievable benchmark demonstrates that significant improvement is possible with proper tools and processes. Source: Landbase

Measuring Success: Benchmarks and Best Practices

27. Children's Medical Center Dallas reduced duplicates from 22% to 0.14%

This case study demonstrates that dramatic improvement is achievable with sustained focus. The organization maintained this world-class standard for over five years, proving long-term sustainability. Source: DataLere

28. Documentation requirements reduce class action participation by 27.3 percentage points

Complex documentation creates barriers that interact with duplicate detection: claimants may submit multiple times when they are uncertain about the status of their initial submission. Streamlined processes with real-time confirmation reduce both duplicate submissions and abandonment. Source: Talli Settlement Campaigns

Taking Action on Duplicate Detection

The statistics paint a clear picture: duplicate records and duplicate claims represent a massive, persistent challenge that traditional detection methods cannot adequately address. Organizations that continue relying on exact-match systems will miss the 62% of duplicates that are not exact matches, adding to the data quality losses that Gartner puts at an average of $13 million per organization annually.

AI-powered platforms offer proven solutions, delivering 30-40% duplicate reduction within months of implementation. Leading organizations have achieved duplicate rates as low as 0.14%, demonstrating that world-class performance is attainable with the right technology and processes.

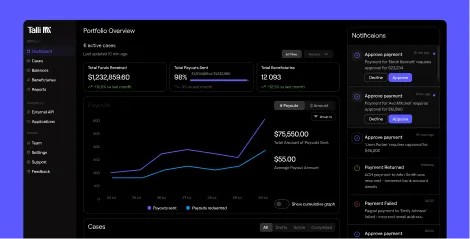

For claims administrators managing settlement distributions, platforms with built-in fraud mitigation, KYC verification, and real-time validation provide essential protection. Talli's AI-driven platform addresses these challenges directly, offering complete fund segregation, OFAC screening, and audit logs that help teams handle settlement fraud while maintaining compliance and visibility.

Frequently Asked Questions

What constitutes a duplicate claim in legal payouts?

A duplicate claim occurs when the same claimant submits multiple times for the same settlement, either intentionally or through confusion about submission status. Duplicates also arise when data entry variations create multiple records for a single individual, such as spelling differences, address changes, or inconsistent formatting.

How significant is the financial impact of undetected duplicates?

Gartner research puts average organizational losses from poor data quality, duplicates included, at $13 million annually. Individual incidents range from roughly $1,950 to resolve a single duplicate healthcare record to a $58,000 overcharge in a single case involving duplicate medical records.

What detection methods prove most effective for duplicate claims?

AI-powered detection systems using semantic analysis outperform traditional exact-match methods significantly. While exact matching catches only 38% of duplicates, advanced systems identify the 62% of "soft duplicates" with formatting variations, spelling differences, and other subtle distinctions.

How does Talli's platform address duplicate claims challenges?

Talli's AI-driven payment platform incorporates fraud mitigation, KYC verification, OFAC screening, and audit logs directly into the payout workflow. Real-time validation during claimant onboarding prevents duplicates at submission, while automated detection identifies existing duplicates before disbursement.

What duplicate rate should claims administrators target?

AHIMA establishes 1% as the achievable industry benchmark, with 22% of organizations meeting this target. World-class organizations have achieved rates as low as 0.14%, demonstrating that dramatic improvement is possible with proper tools and sustained focus.
